Guide Labs debuts a new kind of

Guide Labs has introduced an interpretable large language model (LLM) called Steerling-8B, which is open-sourced and features an architecture aimed at making its actions more transparent.

Introduction to Steerling-8B

Demand for interpretable AI models has grown as organizations rely more heavily on AI for decision-making and need to understand how these systems reach their conclusions. Guide Labs, a company dedicated to advancing AI technologies, has responded by unveiling Steerling-8B, an 8-billion-parameter LLM designed with interpretability at its core.

Key Features of Steerling-8B

Innovative Architecture

Steerling-8B differs from conventional LLMs in its architecture. Many existing models prioritize performance and scale, often at the expense of transparency. Steerling-8B instead integrates mechanisms that let users trace the model’s decision-making process. The design is positioned as more than an incremental improvement: it is a different approach to building LLMs that are accountable to the people who use them.

Open-Sourcing for Community Engagement

By open-sourcing Steerling-8B, Guide Labs aims to foster collaboration within the AI community. Open-source models allow researchers, developers, and organizations to examine the underlying code, test its capabilities, and contribute to its evolution. This approach not only enhances the model’s robustness through community feedback but also promotes transparency in AI development.

Implications of Interpretable LLMs

Enhancing Trust in AI

One of the most significant implications of interpretable LLMs like Steerling-8B is the potential to enhance trust in AI systems. As AI becomes increasingly integrated into various sectors, including healthcare, finance, and law enforcement, stakeholders are concerned about the “black box” nature of many existing models. By providing insights into how decisions are made, Steerling-8B can help alleviate fears surrounding AI’s unpredictability.

Applications Across Industries

The versatility of Steerling-8B opens up numerous applications across different industries:

- Healthcare: In medical diagnostics, interpretable models can assist healthcare professionals by explaining the rationale behind treatment recommendations, which can support better patient outcomes.

- Finance: In financial services, Steerling-8B can help institutions comply with regulatory requirements by providing clear explanations for credit decisions and risk assessments.

- Legal: In the legal field, the model can aid in case analysis by elucidating the reasoning behind legal recommendations, making it easier for lawyers to understand and trust AI-generated insights.

Technical Specifications

Parameter Count and Training

Steerling-8B has 8 billion parameters, which places it in the mid-range of current large language models. This size is meant to balance performance and interpretability: large enough to handle complex tasks, small enough to remain accessible for analysis. The training process involved diverse datasets, intended to give the model broad coverage across contexts.
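For a rough sense of what 8 billion parameters means in practice, weight memory can be estimated as parameter count times bytes per parameter. The sketch below is illustrative arithmetic only. The precisions listed are common choices, not formats the report attributes to Steerling-8B, and the estimate ignores activations and other runtime overhead.

```python
# Back-of-the-envelope weight memory for an 8-billion-parameter model.
# Assumption: the precisions below are common storage formats, chosen for
# illustration; the report does not say which ones Steerling-8B uses.
PARAM_COUNT = 8_000_000_000

BYTES_PER_PARAM = {
    "float32": 4,
    "float16": 2,
    "int8": 1,
}


def weight_memory_gb(param_count: int, bytes_per_param: int) -> float:
    """Return the memory needed to store the weights, in gigabytes (1e9 bytes)."""
    return param_count * bytes_per_param / 1e9


footprints = {
    dtype: weight_memory_gb(PARAM_COUNT, size)
    for dtype, size in BYTES_PER_PARAM.items()
}

for dtype, gigabytes in footprints.items():
    print(f"{dtype}: {gigabytes:.1f} GB")
```

At 16-bit precision the weights alone come to about 16 GB, which is one reason a model of this size is practical to load and inspect on a single high-memory accelerator.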

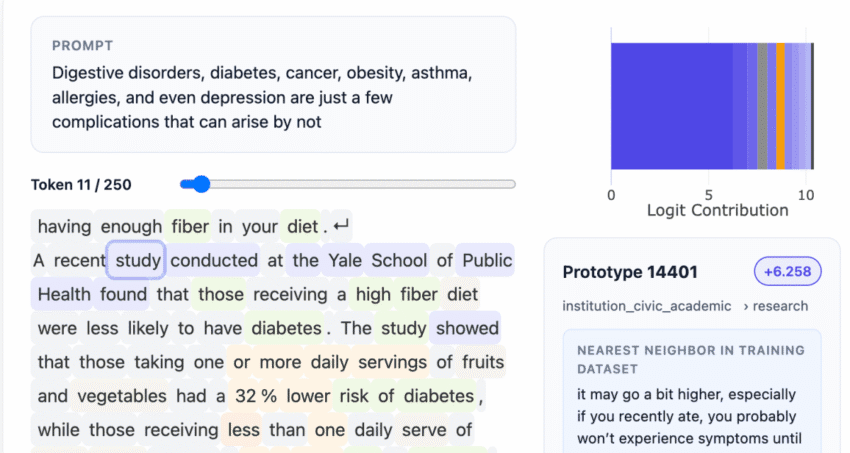

Interpretable Mechanisms

The architecture of Steerling-8B incorporates several interpretable mechanisms that allow users to understand the model’s reasoning. These mechanisms include:

- Attention Visualization: Users can visualize which parts of the input data the model focused on when generating a response, providing insights into its decision-making process.

- Feature Importance Scores: The model can assign importance scores to different features in the input data, helping users identify which elements influenced the output the most.

- Explanatory Outputs: Steerling-8B can generate explanations alongside its primary outputs, offering users a clearer understanding of the rationale behind its conclusions.
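The first two mechanisms can be illustrated with generic techniques. The sketch below is a minimal example in plain Python and does not use Steerling-8B’s actual interface, which the report does not describe. It computes scaled dot-product attention weights for a single query and leave-one-out (occlusion) importance scores. The token list, the key vectors, and the `toy_score` function are hypothetical stand-ins chosen for illustration.

```python
import math


def softmax(scores):
    """Numerically stable softmax over a list of floats."""
    peak = max(scores)
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def attention_weights(query, keys):
    """Scaled dot-product attention weights of one query over all keys."""
    scale = math.sqrt(len(query))
    scores = [sum(q * k for q, k in zip(query, key)) / scale for key in keys]
    return softmax(scores)


def occlusion_importance(tokens, score_fn):
    """Leave-one-out importance: how much the score drops without each token."""
    baseline = score_fn(tokens)
    return [
        baseline - score_fn(tokens[:i] + tokens[i + 1:])
        for i in range(len(tokens))
    ]


# Hypothetical input: four tokens with made-up 2-dimensional key vectors.
tokens = ["loan", "denied", "low", "income"]
keys = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0], [0.5, 0.5]]
query = [1.0, 1.0]

weights = attention_weights(query, keys)

# Hypothetical scorer standing in for a model output; a real model would
# be queried here instead.
KEYWORD_WEIGHTS = {"denied": 2.0, "low": 1.0}


def toy_score(toks):
    return sum(KEYWORD_WEIGHTS.get(t, 0.0) for t in toks)


importance = occlusion_importance(tokens, toy_score)

for token, weight, score in zip(tokens, weights, importance):
    print(f"{token:>7}  attention={weight:.3f}  importance={score:.1f}")
```

Attention weights show where the model looked, while occlusion scores show which inputs changed the output. The two can disagree, which is why interpretability tools often report both.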

Stakeholder Reactions

Industry Experts

The introduction of Steerling-8B has garnered attention from industry experts who emphasize the importance of interpretability in AI. Many experts believe that as AI systems become more prevalent, the ability to explain their decisions will be crucial for widespread adoption. Dr. Emily Chen, a leading AI researcher, stated, “Steerling-8B represents a significant step forward in making AI more understandable. This model could set a new standard for how we approach AI transparency.”

Regulatory Bodies

Regulatory bodies are also closely monitoring developments in interpretable AI. With increasing scrutiny on AI systems, particularly in sensitive areas like finance and healthcare, the ability to provide clear explanations for decisions could help organizations meet compliance requirements. Regulatory expert Mark Thompson noted, “Models like Steerling-8B could play a critical role in helping companies navigate the complex landscape of AI regulations.”

Challenges and Considerations

Balancing Performance and Interpretability

While the benefits of interpretable LLMs are clear, balancing performance and interpretability remains an inherent challenge. Many high-performing models gain accuracy at the cost of transparency, a trade-off that is difficult to navigate. Guide Labs has attempted to address this issue with Steerling-8B, but ongoing research will be needed to refine the balance further.

Potential Misuse of Interpretability

Another consideration is the potential misuse of interpretable models. While transparency can foster trust, it can also be exploited by malicious actors. For instance, if users understand how a model makes decisions, they may attempt to manipulate inputs to achieve desired outputs. This underscores the importance of ethical guidelines and responsible AI practices as the technology continues to evolve.

The Future of Interpretable LLMs

Research and Development

The launch of Steerling-8B is likely to spur further research into interpretable AI. As more organizations recognize the value of transparency, we can expect to see an increase in the development of models that prioritize interpretability. This trend may lead to the emergence of new techniques and methodologies aimed at enhancing the explainability of AI systems.

Broader Adoption Across Sectors

As industries begin to adopt interpretable models like Steerling-8B, we may witness a shift in how AI is integrated into business processes. Companies may prioritize models that not only deliver high performance but also provide clear insights into their operations. This could lead to a more responsible and ethical approach to AI deployment, ultimately benefiting both businesses and consumers.

Conclusion

Guide Labs’ introduction of Steerling-8B marks a significant advancement in the field of interpretable AI. By combining a robust architecture with open-source accessibility, the model aims to enhance transparency and trust in AI systems. As industries increasingly adopt such technologies, the implications for decision-making, compliance, and ethical considerations will be profound. The journey toward fully interpretable AI is just beginning, and Steerling-8B is poised to play a pivotal role in shaping its future.

Source: Original report

Last Modified: February 23, 2026 at 11:40 pm
