Big Data is not a new term for IT companies that want to ensure quality and faster product releases. Big data applications call for several types of testing, including performance testing, functional testing, and database testing. Big data refers to a large volume of structured and unstructured data that may exist in various forms, including files, images, and videos. When building a strategy for any business, market research and analysis are key elements. Firms have now started shifting their focus from what they want to sell to what customers actually need, which brings big data under the spotlight. Collecting that data through surveys and feedback is a time-consuming and tedious process.

Key Components of Big Data Testing

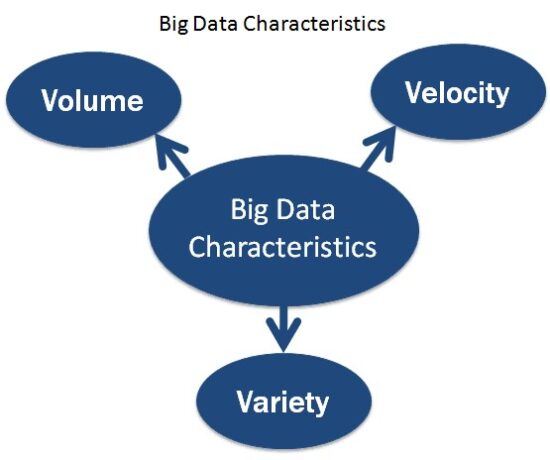

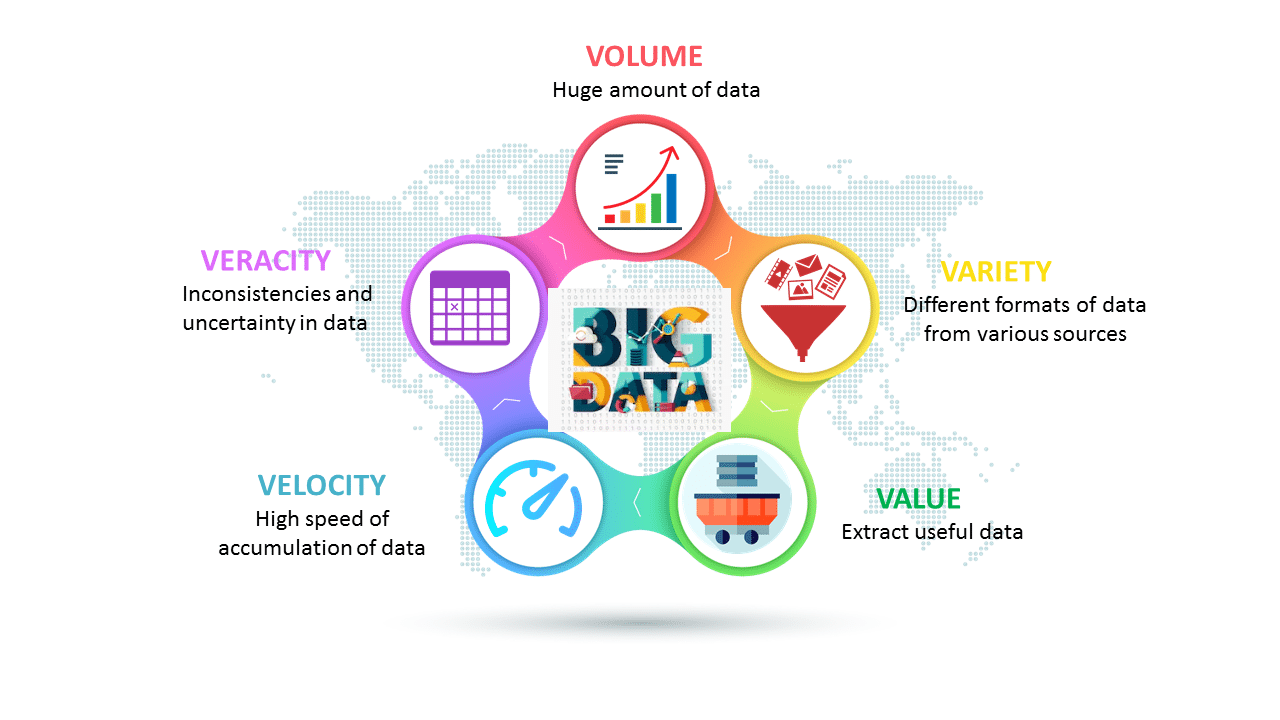

Big data is mainly defined in terms of volume, velocity, and variety. The field changed tremendously in 2019, and the big data market is said to be on course to reach $247 billion by 2022.

The key components of big data are the 3 Vs: Volume, Velocity, and Variety. Data gathered at this scale needs to be stored, analyzed, and retrieved systematically. Traditional databases are good at working with structured data that can be stored in rows and columns. However, if we have unstructured data that doesn't follow a fixed structure, a relational database isn't the right choice.

The volume of data that gets created in a big data system may be significantly larger than what traditional databases hold, which makes it hard to handle with conventional databases.

Volume: The volume of data gathered by organizations and businesses is enormous and comes from various sources such as sensors, meter readings, business transactions, and many other channels.

Velocity: Data is created at high speed and must be handled and processed quickly. Sources such as IoT devices, RFID tags, smart meters, and others generate data automatically at unprecedented speed.

Variety: Data comes in all formats. It can be audio, video, numeric data, text, email, satellite images, atmospheric sensor readings, or any other available medium.

Now that we know about the Vs and the data types, we need to look at the approaches involved in data testing.

1. Data Validation

To ensure that the data is accurate, testers check the sources used to obtain it. They then load the data into the Hadoop Distributed File System (HDFS) for validation.

This is the stage where testers make sure that the collected data isn't corrupted. Once the data is in HDFS, it is partitioned and checked thoroughly in a step-by-step validation process. The tools used for these steps include a) Datameer, b) Talend, and c) Informatica.

Essentially, this stage ensures that only the correct data enters the HDFS location. After this step is finished, the data moves to the next phase of the Hadoop testing framework.
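The checks described above can be sketched in a few lines of Python. This is only a minimal illustration, not the method of any of the tools named here: it assumes the source records and the records staged for HDFS are both available as lists of dictionaries, and the names `record_checksum`, `validate_ingest`, and the `required` field set are hypothetical.

```python
import hashlib
import json


def record_checksum(record):
    """Return a stable SHA-256 digest for one record (a dict of JSON-serializable values)."""
    canonical = json.dumps(record, sort_keys=True, separators=(",", ":"))
    return hashlib.sha256(canonical.encode("utf-8")).hexdigest()


def validate_ingest(source, staged, required):
    """Compare source records with their staged copies and return a list of problems."""
    problems = []

    # Check 1: no records were dropped or duplicated during ingestion.
    if len(source) != len(staged):
        problems.append(
            f"count mismatch: {len(source)} source vs {len(staged)} staged"
        )

    # Check 2: every staged record carries the required fields.
    for index, record in enumerate(staged):
        missing = required - set(record)
        if missing:
            problems.append(f"record {index}: missing fields {sorted(missing)}")

    # Check 3: staged content matches source content, ignoring record order.
    source_sums = sorted(record_checksum(r) for r in source)
    staged_sums = sorted(record_checksum(r) for r in staged)
    if source_sums != staged_sums:
        problems.append("checksum mismatch: staged data differs from source")

    return problems


# Hypothetical sample data: the staged copy is complete but arrives in a different order.
source = [{"id": 1, "name": "Ann"}, {"id": 2, "name": "Bob"}]
staged = [{"id": 2, "name": "Bob"}, {"id": 1, "name": "Ann"}]
print(validate_ingest(source, staged, {"id", "name"}))  # an empty list means no problems were found
```

In a real pipeline the same three checks (record counts, required fields, checksums) would typically run per partition rather than over one in-memory list.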

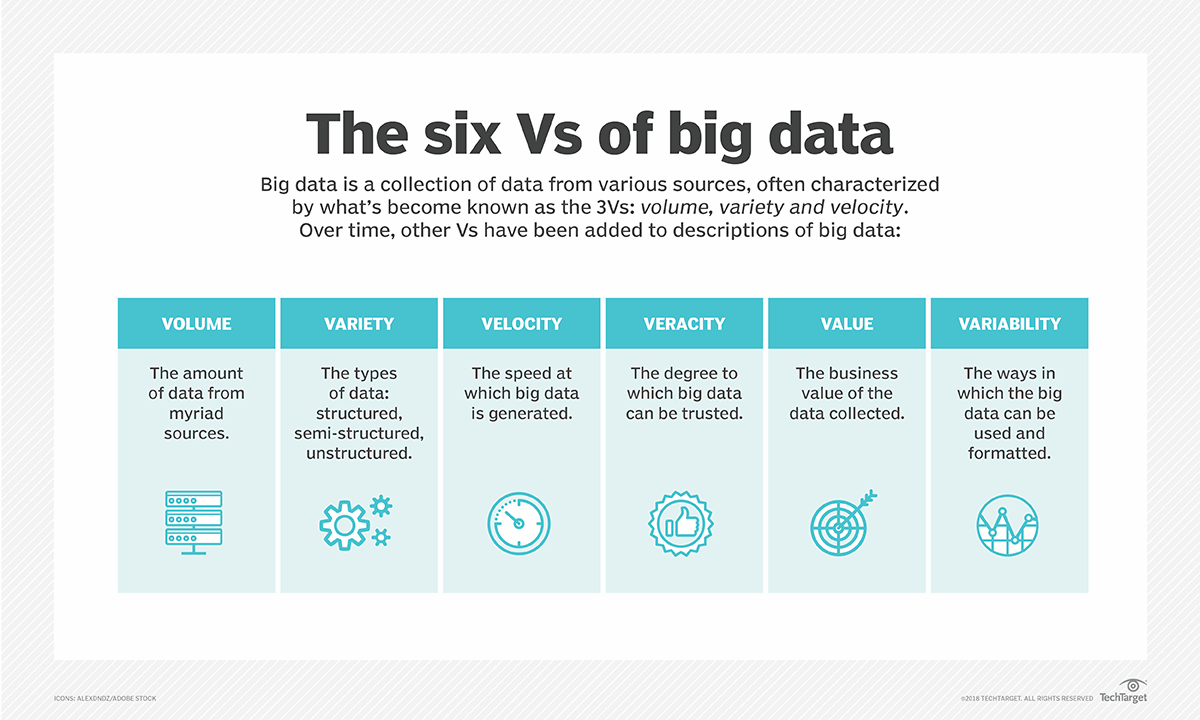

The number of ‘Vs’ tends to grow as you learn more about Big Data.

2. Process Validation

Once the data has been matched against its sources, it is pushed to the right location. Testers verify the business logic node by node and then verify it again across different nodes.

This step is called Business Logic Validation or Process Validation. Here the tester checks the business logic at every node point. The tool used is MapReduce. The tester needs to verify the process and the key-value pair generation. Data validation is considered complete only after this step, that is, once the data has been validated after the MapReduce process.
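To make the idea of checking key-value pair generation concrete, here is a small in-memory sketch in Python. It does not use Hadoop itself; it only imitates the map and reduce phases so the checks are easy to see. The business rule (total sales amount per region), the sample partitions, and the function names `map_phase`, `reduce_phase`, and `check_pairs` are all assumptions made for illustration.

```python
from collections import defaultdict


def map_phase(records):
    """Emit one (region, amount) key-value pair per input record."""
    for record in records:
        yield record["region"], record["amount"]


def reduce_phase(pairs):
    """Sum the values for each key, as a reducer would."""
    totals = defaultdict(int)
    for key, value in pairs:
        totals[key] += value
    return dict(totals)


def check_pairs(pairs):
    """Return True if every pair has a non-empty string key and a non-negative integer value."""
    return all(
        isinstance(key, str) and bool(key) and isinstance(value, int) and value >= 0
        for key, value in pairs
    )


# Each inner list stands in for the records held by one node (hypothetical data).
partitions = [
    [{"region": "north", "amount": 10}, {"region": "south", "amount": 5}],
    [{"region": "north", "amount": 7}],
]

all_pairs = []
for node_records in partitions:
    node_pairs = list(map_phase(node_records))
    # Node-level check: the pairs produced on this node are well formed.
    assert check_pairs(node_pairs)
    all_pairs.extend(node_pairs)

# Cross-node check: the aggregated result matches the expected business outcome.
totals = reduce_phase(all_pairs)
print(totals)
```

The per-node assertion corresponds to verifying the logic node by node, and comparing `totals` with an independently computed expectation corresponds to verifying it across nodes.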

There are also 6 ‘Vs’. The more you learn about Big Data, the more there is to learn.

3. Output Validation

In this stage, the generated data is loaded into the downstream systems. Testers send the data to the repository where it can be further processed or analyzed.

The data is loaded downstream so that testers can check it for any distortions. The output files created in the process are moved to the EDW (Enterprise Data Warehouse). Put simply, this stage checks for data corruption.
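A simple way to picture this check is a reconciliation between the rows the processing stage produced and the rows that actually landed in the warehouse. The Python sketch below assumes both sets of rows can be read as lists of dictionaries that share an `id` key; the function name `reconcile` and the sample rows are hypothetical.

```python
def reconcile(expected_rows, warehouse_rows, key="id"):
    """Compare processed output with warehouse contents and report the differences."""
    expected = {row[key]: row for row in expected_rows}
    loaded = {row[key]: row for row in warehouse_rows}
    shared = expected.keys() & loaded.keys()
    return {
        # Rows that were produced but never arrived downstream.
        "missing": sorted(expected.keys() - loaded.keys()),
        # Rows that exist downstream but were never produced.
        "unexpected": sorted(loaded.keys() - expected.keys()),
        # Rows present on both sides whose content differs (possible corruption).
        "mismatched": sorted(k for k in shared if expected[k] != loaded[k]),
    }


# Hypothetical data: row 1 was lost, row 2 was altered, row 4 appeared from nowhere.
expected_rows = [
    {"id": 1, "total": 17},
    {"id": 2, "total": 5},
    {"id": 3, "total": 9},
]
warehouse_rows = [
    {"id": 2, "total": 50},
    {"id": 3, "total": 9},
    {"id": 4, "total": 1},
]

report = reconcile(expected_rows, warehouse_rows)
print(report)
```

An empty list under all three headings would indicate that the load introduced no distortion for the rows compared.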

Uses of Big Data in Real Life

At a time when every part of our daily life is device-oriented, a huge volume of data is emanating from various digital sources.

Naturally, analyzing such a huge volume of data with traditional data processing tools has posed plenty of challenges. To overcome them, big data solutions such as Hadoop were introduced. These tools are what made real applications of big data possible.

More and more organizations, both large and small, are taking advantage of the benefits provided by big data applications and find that these benefits help them grow quickly.

Big Data in Education Industry

The education industry is flooded with huge amounts of data related to students, faculty, courses, results, and so on. It is now understood that proper study and analysis of this data can provide insights that improve the operational effectiveness and working of educational institutions.

The following are some of the areas in the education industry that have been transformed by big data:

Customized Learning Programs

Customized programs and schemes that benefit individual students can be created using data collected from each student's learning history. This improves overall student results.

Restructure Course Material

Course material can be restructured using data on what each student learns, and to what extent, gathered through real-time monitoring of the components of a course.

Career Prediction

Proper analysis of each student's records helps in understanding that student's progress, strengths, weaknesses, interests, and more. It also helps in determining which career would be the most suitable for the student in the future.

Big data has offered a solution to one of the biggest pitfalls in the education system, the one-size-fits-all style of academic set-up, by contributing to e-learning solutions.

Example:

The University of Alabama has more than 38,000 students and an ocean of data. In the past, when there were no real solutions for analyzing that much data, some of it seemed useless. Now, administrators can apply analytics and data visualizations to this data to draw out student patterns that are reshaping the university's operations, recruitment, and retention efforts.

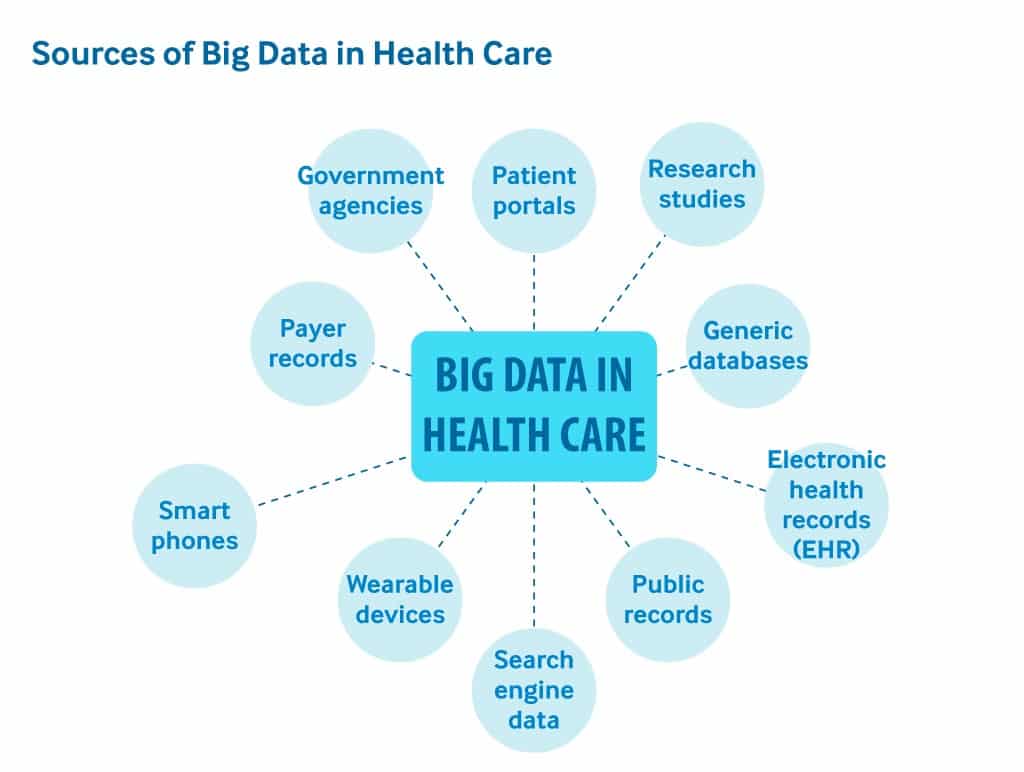

Big Data in Healthcare Industry

Healthcare is another industry that is bound to generate a huge amount of data. The following are some of the ways in which big data has contributed to healthcare:

- Big data reduces the cost of treatment, since there is less chance of performing unnecessary diagnoses.

- It helps in predicting outbreaks of epidemics and in deciding what preventive measures could be taken to minimize their effects.

- It helps avoid preventable diseases by detecting them at an early stage. This keeps them from getting worse, which makes their treatment easier and more effective.

- Patients can be given evidence-based medicine, which is identified and prescribed after researching past medical results.

Example:

Wearable devices and sensors have been introduced in the healthcare industry that can provide a real-time feed to a patient's electronic health record. One such technology comes from Apple.

Apple has come up with Apple HealthKit, CareKit, and ResearchKit. The main goal is to empower iPhone users to store and access their real-time health records on their phones.

Big Data in Media and Entertainment Industry

With people having access to various digital gadgets, the generation of a large amount of data is inevitable, and this is the main cause of the rise of big data in the media and entertainment industry.

Apart from this, social media platforms are another way in which a huge amount of data is being generated. Businesses in the media and entertainment industry have realized the importance of this data and have been able to benefit from it for their growth.

Some of the benefits extracted from big data in the media and entertainment industry are given below:

- Predicting the interests of audiences

- Optimized or on-demand scheduling of media streams on digital media distribution platforms

- Getting insights from customer reviews

- Effective targeting of advertisements

Example:

Spotify, an on-demand music platform, uses analytics: it collects data from all of its users around the world and then uses the analyzed data to give informed music recommendations and suggestions to each individual user.

Amazon Prime, which offers videos, music, and Kindle books in a one-stop shop, is also keen on using big data.

So why is big data important in software quality assurance?

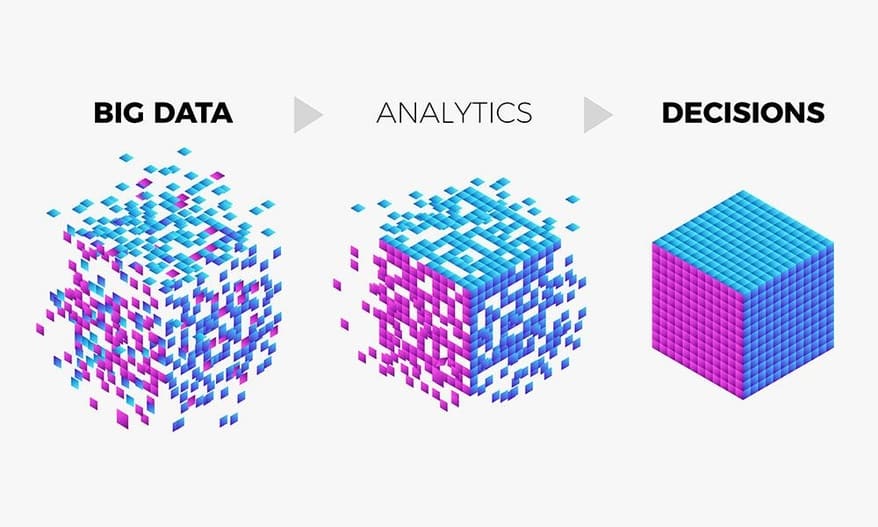

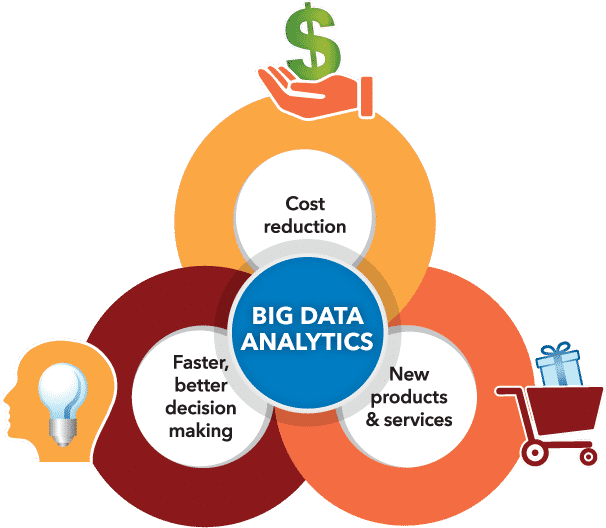

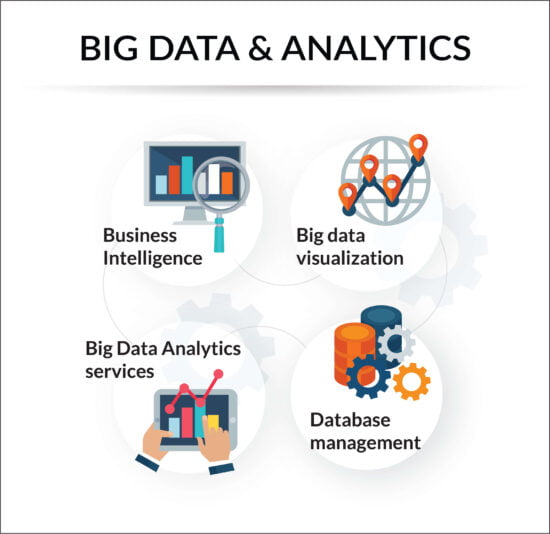

Big data testing works on three basic levels: information integration, data collection and deployment, and scalability. It is a type of testing that revolves around the volume, variety, and velocity of data, and it ensures quality and adds value for businesses by improving customer experience. In 2019, there was a boom in the big data industry. Testing big data applications requires a certain mindset, skill set, and a deep understanding of the technologies involved. While performing big data testing, companies need to take all measures to safeguard the confidentiality of the data. Big data analysts and testers help businesses determine which strategy resonates with their customers. This also allows them to figure out how to improve software quality and provide their customers with refined data that supports their business growth.

Conclusion

The current digital era involves more inter-connectedness between companies and customers, making data collection easier than ever before. Customers produce huge amounts of data, which can be valuable for achieving business goals and objectives, and with such volumes of data available, companies can use it to the fullest. The world undergoes technological change constantly, which causes a lot of disruption in business processes. Testing is a critical part of the quality assurance process, and QA teams address the issues that big data applications face before they are certified at QA levels that match industry standards. Big data has evolved a great deal, with a number of smart devices for collecting and organizing data, and will continue to evolve as time goes on. Data is power.

Last Modified: June 28, 2024 at 11:04 am
