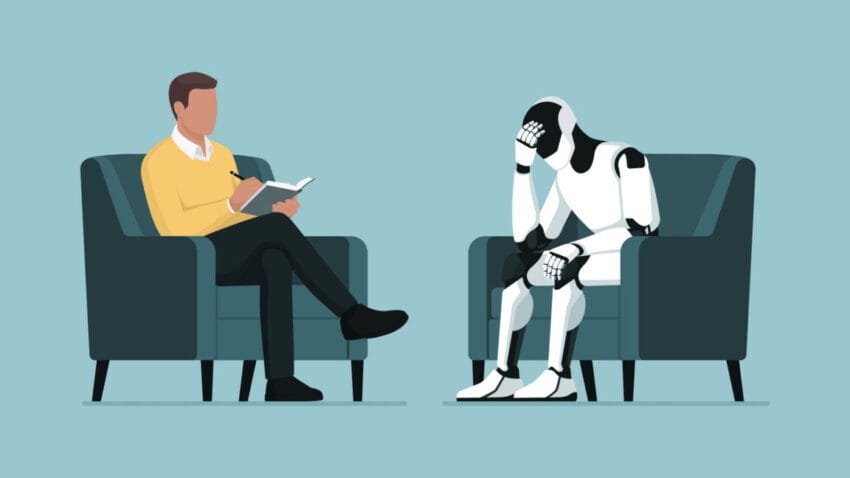

OpenAI has taken a significant step in addressing concerns about the safety and mental health implications of its AI chatbot, ChatGPT, by forming an Expert Council on Wellness and AI.

Background on the Initiative

The formation of the Expert Council follows a lawsuit that accused ChatGPT of acting as a “suicide coach” for a teenager. The allegation highlighted the potential risks of AI interactions, particularly for vulnerable groups such as adolescents. Since then, OpenAI has faced pressure to strengthen ChatGPT’s safety features so that the chatbot serves as a supportive tool rather than a harmful one.

Earlier this year, OpenAI began consulting with experts informally, focusing primarily on parental controls and the implications of AI technology for young people. This initial consultation laid the groundwork for the formal Expert Council, which aims to provide guidance on making ChatGPT a healthier option for all users.

Composition of the Expert Council

OpenAI’s Expert Council on Wellness and AI comprises eight leading researchers and experts with decades of experience studying the intersection of technology, emotions, motivation, and mental health. The council is intended to ensure that ChatGPT’s updates and features are informed by a deep understanding of how technology affects mental well-being.

Expertise and Focus Areas

One of the primary focuses of the council is to understand how to build technology that supports healthy youth development. OpenAI emphasized the importance of including experts who have a background in this area, as teenagers engage with ChatGPT differently than adults. This differentiation is crucial, given that adolescents are still developing their emotional and cognitive skills, making them more susceptible to the influences of technology.

The council members are expected to provide insights into various aspects of mental health, including:

- Understanding the emotional responses elicited by AI interactions.

- Identifying potential risks associated with AI usage among teenagers.

- Developing strategies to mitigate harmful interactions.

- Promoting positive engagement with technology to enhance mental well-being.

Implications of the Council’s Formation

The establishment of the Expert Council signals OpenAI’s commitment to prioritizing user safety and mental health in its AI products. By actively seeking expert guidance, the company aims to address the growing concerns surrounding AI’s impact on mental health, particularly among younger users.

Furthermore, this initiative aligns with broader trends in the tech industry, where companies are increasingly recognizing the need for ethical considerations in AI development. As AI technologies become more integrated into daily life, the potential for misuse or harmful consequences becomes a pressing issue that requires proactive measures.

Stakeholder Reactions

The formation of the Expert Council has garnered mixed reactions from stakeholders. Advocates for mental health and technology ethics have praised OpenAI for taking a step in the right direction. They argue that involving experts in the development process is essential for creating responsible AI systems that prioritize user well-being.

However, some critics see the council as a reactive measure rather than a proactive one, arguing that OpenAI should have prioritized user safety from the outset instead of waiting for a lawsuit to prompt action. This criticism reflects the ongoing debate about how much responsibility tech companies bear for the safety of their products.

Future Directions for ChatGPT

As the Expert Council begins its work, several key areas are likely to be prioritized in the ongoing development of ChatGPT. These areas include:

- Enhanced Safety Features: The council will likely advise on safety features that can identify and mitigate harmful interactions, particularly for younger users.

- Educational Resources: OpenAI may work on creating educational materials for users and parents to help them understand how to engage with ChatGPT safely and effectively.

- Feedback Mechanisms: Establishing channels for users to provide feedback on their experiences with ChatGPT will be crucial for continuous improvement and adaptation.

- Research Collaboration: The council may collaborate with academic institutions and mental health organizations to conduct research on the effects of AI interactions on mental health.

Challenges Ahead

While the formation of the Expert Council is a positive development, OpenAI faces several challenges as it seeks to enhance the safety and well-being of its users. One significant challenge is the rapidly evolving nature of AI technology. As new features and capabilities are developed, the potential for misuse or harmful interactions may also increase.

Additionally, balancing innovation with safety will require ongoing vigilance and adaptability. OpenAI must remain responsive to emerging concerns and feedback from users and experts alike. This dynamic landscape necessitates a commitment to continuous improvement and ethical considerations in AI development.

Broader Context in AI Development

Other tech companies have also begun to prioritize mental health and user safety in their AI products. For instance, several platforms have implemented features designed to promote positive engagement and mitigate harmful interactions. This trend reflects a growing awareness of the responsibilities that come with developing powerful technologies.

Conclusion

OpenAI’s formation of the Expert Council on Wellness and AI represents a significant step toward addressing the mental health implications of AI interactions, particularly for vulnerable populations like teenagers. By bringing together leading experts in the field, OpenAI aims to enhance the safety and well-being of its users while navigating the complex landscape of AI technology.

As the council begins its work, the implications of its findings and recommendations will be closely watched by stakeholders across the tech industry and mental health advocacy communities. The ongoing dialogue surrounding AI ethics and user safety will continue to shape the future of AI development, making it essential for companies like OpenAI to remain proactive in their efforts to prioritize user well-being.

Source: Original report

Last Modified: October 14, 2025 at 11:35 pm