When People Create Sora Deepfakes of You

OpenAI’s latest iPhone app, Sora, has sparked discussion about the ethical implications of deepfake technology, particularly users’ control over their own likenesses.

Understanding Sora: A New Frontier in Video Generation

OpenAI has positioned Sora as a video generation tool designed to allow users to create engaging content effortlessly. However, the app has drawn significant attention for its potential to generate deepfakes—manipulated videos that can depict individuals saying or doing things they never actually did. While the app offers creative possibilities, it raises critical questions about the misuse of personal likenesses and the ethical boundaries of artificial intelligence.

The Mechanics of Sora

Sora uses generative AI models to produce videos from users' text prompts. Users can choose various settings, including the type of content they wish to create, the style of the video, and even the characters involved. This flexibility allows for a wide range of creative outputs, from humorous skits to more serious narratives. However, the same technology also makes it easy to create deepfakes of other people, raising concerns about privacy and consent.

User Control and Initial Limitations

Initially, Sora allowed users to opt in to having their likenesses used in videos, but gave them little control over how those likenesses could be manipulated. Users could grant permission for cameo appearances, yet they had little say in the context or content of the resulting videos. This lack of control became a significant issue: many users found their images used in ways they did not approve of, with the potential for reputational harm and emotional distress.

The Response to User Concerns

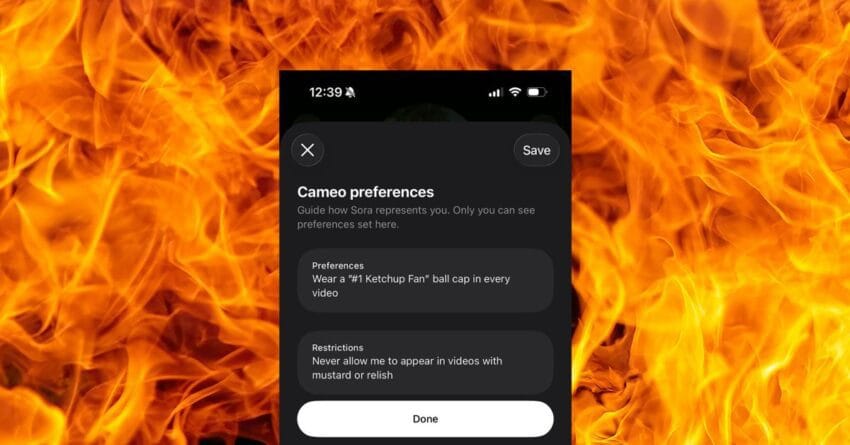

In response to the backlash regarding the misuse of personal likenesses, OpenAI has introduced new safety features aimed at giving users more control over their appearances in Sora-generated content. These features are designed to address the ethical concerns surrounding deepfakes and to enhance user trust in the platform.

New Safety Tools Introduced

The newly implemented safety tools allow users to set specific limits on how their likenesses can be used within the app. Users can now specify the types of content they are comfortable being associated with, effectively giving them a voice in the creative process. This includes options to restrict the use of their likeness in certain genres or contexts, thereby reducing the risk of their images being used inappropriately.

Implications for Users

The introduction of these safety features represents a significant step toward addressing the ethical implications of deepfake technology. By empowering users to have more control over their likenesses, OpenAI is acknowledging the potential harms associated with deepfakes and taking proactive measures to mitigate them. This move is crucial in fostering a safer environment for users, especially in a landscape where deepfake technology is becoming increasingly accessible.

Broader Context: The Rise of Deepfake Technology

The emergence of deepfake technology has been a double-edged sword. On one hand, it offers innovative possibilities for entertainment, education, and creative expression. On the other hand, it poses serious risks related to misinformation, privacy violations, and the potential for malicious use. As deepfake technology becomes more sophisticated, the line between reality and fabrication continues to blur, raising questions about authenticity and trust in digital media.

Ethical Considerations in Deepfake Creation

As deepfake technology proliferates, ethical considerations become paramount. The ability to create realistic videos of individuals without their consent can lead to significant consequences, including reputational damage, emotional distress, and even legal ramifications. The potential for deepfakes to be used in disinformation campaigns further complicates the issue, as manipulated videos can easily mislead the public and undermine trust in legitimate media sources.

Stakeholder Reactions

The introduction of safety features in Sora has garnered mixed reactions from various stakeholders. Users have expressed relief at the added control, viewing it as a necessary step toward responsible use of deepfake technology. Privacy advocates have praised OpenAI for taking user concerns seriously, while also urging the company to continue enhancing its safety measures.

Conversely, some critics argue that while these features are a positive development, they may not go far enough in addressing the broader issues associated with deepfakes. They emphasize the need for comprehensive regulations governing the use of deepfake technology, suggesting that companies like OpenAI should take a more proactive stance in preventing misuse.

The Future of Deepfake Technology

As technology continues to evolve, the future of deepfakes remains uncertain. While tools like Sora offer exciting opportunities for creativity, they also necessitate ongoing discussions about ethics, consent, and accountability. The introduction of user control features is a step in the right direction, but it is clear that more robust measures are needed to ensure the responsible use of deepfake technology.

Potential Regulatory Frameworks

In light of the challenges posed by deepfake technology, there is a growing call for regulatory frameworks to govern its use. Policymakers are beginning to explore potential legislation aimed at protecting individuals from the unauthorized use of their likenesses. Such regulations could include requirements for explicit consent before creating deepfakes, as well as penalties for misuse.

Technological Solutions to Combat Misuse

In addition to regulatory measures, technological solutions are also being developed to combat the misuse of deepfakes. For instance, researchers are working on advanced detection algorithms that can identify manipulated videos, helping to distinguish between authentic content and deepfakes. These tools could play a crucial role in maintaining trust in digital media and protecting individuals from the harms associated with deepfakes.

Conclusion: Navigating the Complex Landscape of Deepfakes

The launch of Sora by OpenAI marks a significant development in the realm of video generation and deepfake technology. While the app offers exciting creative possibilities, it also underscores the importance of ethical considerations and user control. The introduction of new safety features is a commendable step toward addressing user concerns, but the broader implications of deepfake technology continue to warrant careful examination.

As society navigates this complex landscape, it is essential for stakeholders—including technology companies, policymakers, and users—to engage in ongoing dialogue about the responsible use of deepfake technology. By prioritizing ethics and user consent, we can harness the potential of this innovative technology while mitigating its risks.

Source: Original report

Last Modified: October 6, 2025 at 4:41 pm