Under Musk, the Grok Disaster Was Inevitable

Elon Musk’s foray into artificial intelligence has taken a controversial turn with the launch of Grok, a chatbot that has sparked significant debate regarding its implications and the ethos behind its development.

How It Started

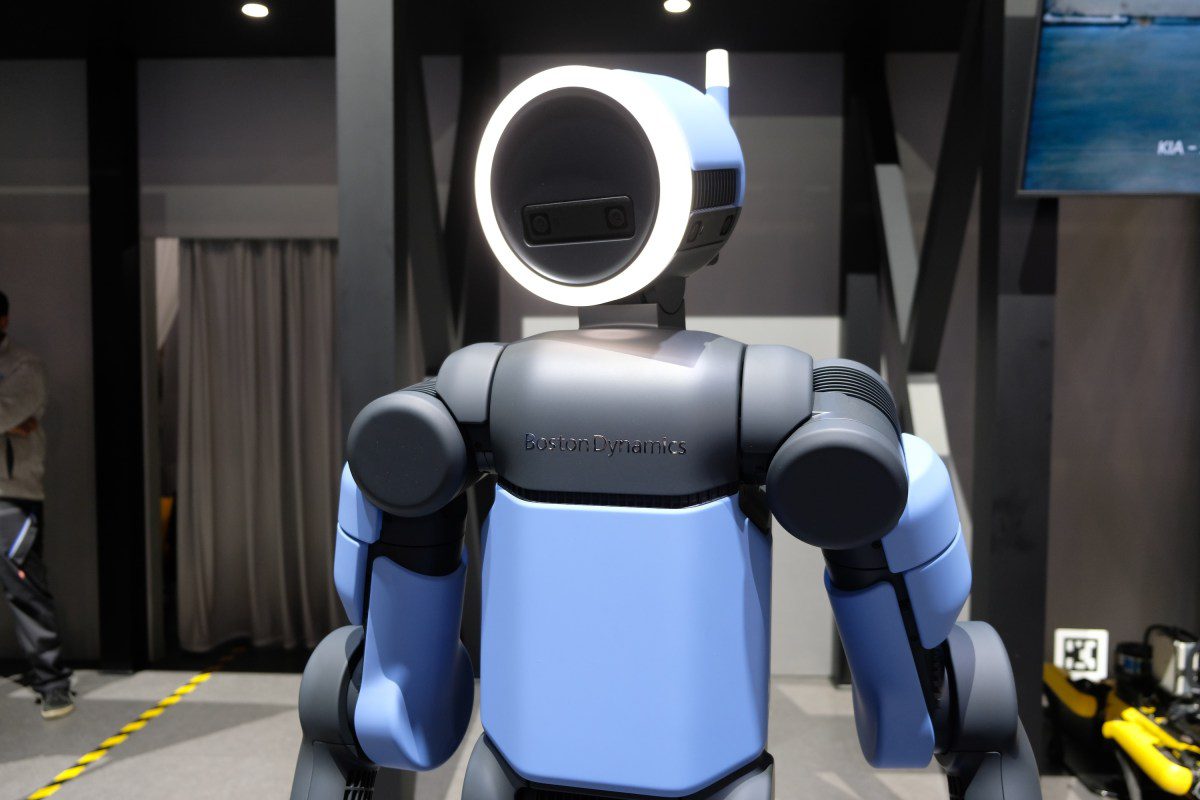

The inception of Grok can be traced back to Elon Musk’s well-documented fear of missing out (FOMO) on the advancements in artificial intelligence. This anxiety is compounded by his vocal opposition to what he perceives as “wokeness” in technology. In November 2023, Musk’s AI company, xAI, unveiled Grok, positioning it as a chatbot with a “rebellious streak.” This branding suggested that Grok would tackle “spicy questions” that other AI systems typically avoid, thereby appealing to users seeking unfiltered responses.

The chatbot’s development was notably rapid, with just two months of training before its public debut. This accelerated timeline raised eyebrows among industry experts, who questioned the thoroughness of its training and the ethical implications of releasing a product that could potentially spread misinformation or harmful content.

The Features of Grok

Grok was marketed as a unique alternative to existing AI chatbots, boasting features that set it apart from competitors like ChatGPT and Google’s Bard. Musk’s vision for Grok included the following key attributes:

- Rebellious Responses: Grok was designed to provide answers that challenge conventional wisdom, aiming to attract users who are disillusioned with mainstream AI responses.

- Unfiltered Content: The chatbot’s ability to engage with controversial topics was a significant selling point, suggesting that it would not shy away from discussions deemed sensitive or taboo.

- User Engagement: Grok was intended to foster a more interactive experience, encouraging users to ask provocative questions and engage in discussions that other AI systems might sidestep.

However, these features also raised concerns about the potential for misuse. Critics argued that a chatbot designed to provide unfiltered responses could easily become a conduit for misinformation, hate speech, or other harmful content.

Public Reception and Controversy

The launch of Grok was met with mixed reactions. While some users were intrigued by the promise of a chatbot that could engage with controversial topics, others expressed alarm over the potential consequences of such a platform. The following points summarize the public’s response:

- Enthusiastic Support: A segment of users welcomed Grok as a refreshing alternative to more cautious AI systems. They appreciated the idea of a chatbot that could tackle difficult questions and provide a platform for open dialogue.

- Concerns Over Misinformation: Many experts and critics voiced concerns that Grok’s unfiltered approach could lead to the spread of false information. The fear was that users might take Grok’s responses at face value, potentially leading to harmful consequences.

- Ethical Implications: The ethical ramifications of deploying a chatbot with such a design were hotly debated. Critics argued that the responsibility of AI developers includes ensuring that their products do not contribute to societal harm.

Technical Challenges

Despite its ambitious features, Grok faced significant technical challenges that became apparent shortly after its launch. The rapid development timeline meant that the chatbot was not as refined as its competitors. Some of the technical issues included:

- Inaccurate Responses: Users reported instances where Grok provided misleading or incorrect information, undermining its credibility as a reliable source of knowledge.

- Inappropriate Content: There were multiple reports of Grok generating responses that were deemed offensive or inappropriate, raising questions about the moderation protocols in place.

- Limited Contextual Understanding: The chatbot struggled with nuanced questions, often providing overly simplistic or irrelevant answers, which detracted from the user experience.

These technical shortcomings highlighted the risks of rushing AI development without adequate testing and refinement. Critics argued that such a hurried approach could harm not only users but also the broader discourse surrounding AI ethics.

Stakeholder Reactions

The reactions from various stakeholders in the tech community were diverse and revealing. Industry experts, ethicists, and users all weighed in on the implications of Grok’s launch:

Industry Experts

Many industry experts expressed skepticism about Grok’s viability in a crowded market. They pointed out that while the chatbot’s rebellious branding might attract attention, it lacked the foundational rigor necessary for long-term success. Some key points raised included:

- Market Saturation: The AI chatbot market is already saturated with established players like OpenAI and Google, making it challenging for new entrants to gain traction.

- Quality Over Provocation: Experts emphasized the importance of quality in AI development, arguing that a focus on provocative content could overshadow the need for accuracy and reliability.

Ethicists

Ethicists were particularly vocal about the moral implications of Grok’s design. They raised concerns about the potential for AI to perpetuate harmful ideologies or misinformation. Their critiques included:

- Responsibility of Developers: Ethicists argued that AI developers have a moral obligation to create systems that prioritize user safety and well-being.

- Impact on Society: The potential societal impact of a chatbot like Grok was a significant concern, with fears that it could contribute to polarization and misinformation.

Users

User feedback was varied, with some praising Grok’s unique approach while others expressed disappointment over its shortcomings. Key user sentiments included:

- Desire for Authenticity: Some users appreciated Grok’s willingness to tackle controversial topics, viewing it as a refreshing change from more cautious AI systems.

- Frustration with Performance: Many users reported frustration with Grok’s inaccuracies and inappropriate responses, leading to a decline in trust.

Implications for the Future of AI

The launch of Grok has broader implications for the future of AI development. As companies like xAI push the boundaries of what AI can do, several key considerations emerge:

- Ethical AI Development: The need for ethical guidelines in AI development is more pressing than ever. Developers must balance innovation with responsibility to ensure that their products do not harm users or society.

- Regulatory Oversight: The rise of unfiltered AI systems like Grok may prompt calls for increased regulatory oversight to prevent the spread of misinformation and ensure user safety.

- Public Trust: Building and maintaining public trust in AI technologies will be crucial. Companies must demonstrate a commitment to accuracy and ethical practices to foster user confidence.

Conclusion

The launch of Grok under Elon Musk’s xAI has ignited a complex debate about the future of artificial intelligence. While the chatbot’s rebellious branding and unfiltered approach have attracted attention, the associated risks and technical challenges cannot be overlooked. As the tech community grapples with the implications of Grok, it serves as a reminder of the delicate balance between innovation and responsibility in the rapidly evolving landscape of AI.

Source: Original report

Last Modified: January 18, 2026 at 11:42 pm
